How DeepMind’s Memory Trick Helps AI Learn Faster

Intelligent machines have humans in their sights. Deep-learning machines already have superhuman skills when it comes to tasks such as face recognition, video-game playing, and even the ancient Chinese game of Go. So it’s easy to think that humans are already outgunned.

But not so fast. Intelligent machines still lag behind humans in one crucial area of performance: the speed at which they learn. When it comes to mastering classic video games, for example, the best deep-learning machines take some 200 hours of play to reach the same skill levels that humans achieve in just two hours.

So computer scientists would dearly love to have some way to speed up the rate at which machines learn.

Today, Alexander Pritzel and pals at Google’s DeepMind subsidiary in London claim to have done just that. These guys have built a deep-learning machine that is capable of rapidly assimilating new experiences and then acting on them. The result is a machine that learns significantly faster than others and has the potential to match humans in the not too distant future.

First, some background. Deep learning uses layers of neural networks to look for patterns in data. When a single layer spots a pattern it recognizes, it sends this information to the next layer, which looks for patterns in this signal, and so on.

So in face recognition, one layer might look for edges in an image, the next layer for circular patterns of edges (the kind that eyes and mouths make), and the next for triangular patterns such as those made by two eyes and a mouth. When all this happens, the final output is an indication that a face has been spotted.

Of course, the devil is in the details. Various feedback mechanisms allow the system to learn by adjusting internal parameters such as the strength of connections between layers. These parameters must change slowly, since a big change in one layer can catastrophically affect learning in the subsequent layers. That’s why deep neural networks need so much training and why it takes so long.
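To see why parameter updates have to be small, consider a toy sketch in Python. It is not a deep network and not anything from the DeepMind paper: it assumes a single made-up parameter `w`, a made-up error function `w * w`, and a hypothetical helper called `descend`. The only point is that small steps creep toward the answer while large steps overshoot and blow up.

```python
def descend(learning_rate, steps=20, start=1.0):
    """Minimize the toy error w * w by plain gradient descent.

    The error function, the starting point and the step count are
    illustrative assumptions, chosen only to show the effect of step size.
    """
    w = start
    for _ in range(steps):
        gradient = 2.0 * w          # derivative of w * w
        w -= learning_rate * gradient
    return w


small_step = descend(0.1)   # each update shrinks w by a factor of 0.8
large_step = descend(1.1)   # each update flips the sign and grows w by 1.2

print("small steps end near the minimum:", abs(small_step) < 0.05)
print("large steps diverge:", abs(large_step) > 10)
```

The small-step run is safe but needs many updates to get close, which is the trade-off behind the long training times described above.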

Pritzel and co have tackled this problem with a technique they call neural episodic control. “Neural episodic control demonstrates dramatic improvements on the speed of learning for a wide range of environments,” they say. “Critically, our agent is able to rapidly latch onto highly successful strategies as soon as they are experienced, instead of waiting for many steps of optimisation.”

The basic idea behind DeepMind’s approach is to copy the way humans and animals learn quickly. The general consensus is that humans can tackle situations in two different ways. If the situation is familiar, our brains have already formed a model of it, which they use to work out how best to behave. This uses a part of the brain called the prefrontal cortex.

But when the situation is not familiar, our brains have to fall back on another strategy. This is thought to involve a much simpler test-and-remember approach involving the hippocampus. So we try something and remember the outcome of this episode. If it is successful, we try it again, and so on. But if it is not a successful episode, we try to avoid it in future.

This episodic approach suffices in the short term while our prefrontal brain learns. But it is soon outperformed by the prefrontal cortex and its model-based approach.

Pritzel and co have used this approach as their inspiration. Their new system has two components. The first is a conventional deep-learning system that mimics the behavior of the prefrontal cortex. The second is more like the hippocampus. When the system tries something new, it remembers the outcome.

But crucially, it doesn’t try to learn what to remember. Instead, it remembers everything. “Our architecture does not try to learn when to write to memory, as this can be slow to learn and take a significant amount of time,” say Pritzel and co. “Instead, we elect to write all experiences to the memory, and allow it to grow very large compared to existing memory architectures.”

They then use a set of strategies to read from this large memory quickly. The result is that the system can latch onto successful strategies much more quickly than conventional deep-learning systems.
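The write-everything, read-quickly idea can be sketched in a few lines of Python. This is a loose illustration, not the authors' implementation: the class name `EpisodicMemory`, the parameters `k` and `delta`, the brute-force nearest-neighbor search and the inverse-distance weighting are all assumptions made for the example. The real system pairs such a memory with a neural network that produces the keys and needs a far faster lookup.

```python
from collections import defaultdict


class EpisodicMemory:
    """Toy episodic memory: store every experience, read by similarity."""

    def __init__(self, k=3, delta=1e-3):
        self.k = k              # how many nearest memories to consult
        self.delta = delta      # avoids division by zero on exact matches
        self.store = defaultdict(list)   # action -> [(key, value), ...]

    def write(self, action, key, value):
        # No decision about what is worth remembering: everything is kept.
        self.store[action].append((tuple(key), float(value)))

    def read(self, action, key):
        # Estimate how well `action` has worked in situations like `key`.
        entries = self.store[action]
        if not entries:
            return 0.0
        scored = sorted(
            (sum((a - b) ** 2 for a, b in zip(key, stored_key)), value)
            for stored_key, value in entries
        )[: self.k]
        weights = [1.0 / (dist + self.delta) for dist, _ in scored]
        weighted = sum(w * value for w, (_, value) in zip(weights, scored))
        return weighted / sum(weights)

    def best_action(self, actions, key):
        return max(actions, key=lambda action: self.read(action, key))


memory = EpisodicMemory()
memory.write("left", (0.0, 0.0), 1.0)    # going left here paid off a little
memory.write("right", (0.0, 0.0), 5.0)   # going right paid off a lot
print(memory.best_action(["left", "right"], (0.1, 0.0)))
```

A single good experience is enough to change what the memory recommends in a similar situation, with no gradual retraining. That is the sense in which such a system can latch onto a successful strategy as soon as it has been seen.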

They go on to demonstrate how well all this works by training their machine to play classic Atari video games, such as Breakout, Pong, and Space Invaders. (This is a playground that DeepMind has used to train many deep-learning machines.)

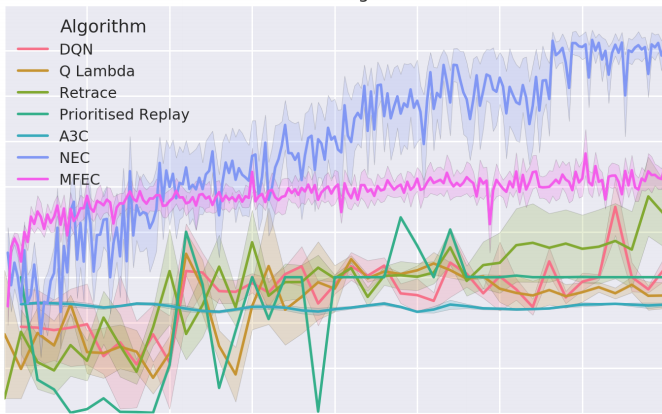

The team, which includes DeepMind cofounder Demis Hassabis, shows that neural episodic control vastly outperforms other deep-learning approaches in the speed at which it learns. “Our experiments show that neural episodic control requires an order of magnitude fewer interactions with the environment,” they say.

That’s impressive work with significant potential. The researchers say that an obvious extension of this work is to test their new approach on more complex 3-D environments.

It’ll be interesting to see which environments the team chooses and what impact this will have on the real world.

Ref: Neural Episodic Control: arxiv.org/abs/1703.01988
